Basic concepts

Kubernetes cluster

Installation

Test your Kubernetes server

Example: setting up a Selenium grid using Kubernetes

Self-healing

Scaling your Selenium Grid

Kubernetes is an open-source platform developed by Google for managing containerized applications across a cluster of servers. It builds on a decade and a half of Google's experience running container clusters at scale, and gives developers Google-style infrastructure built on best-of-breed open-source projects, such as:

- Docker: an application container technology.

- Etcd: a distributed key/value data store that manages cluster-wide information and provides service discovery.

- Flannel: an overlay network fabric that enables container connectivity across multiple servers.

Kubernetes lets developers define their application infrastructure declaratively through YAML files and abstractions such as pods, RCs (replication controllers) and services (more on these later), and ensures that the underlying cluster matches the user-defined state at all times.

Some of its features include:

- Automatic scheduling of system resources and automatic placement of application containers across the cluster.

- Scaling applications on the fly with a single command.

- Rolling updates with zero downtime.

- Self-healing: automatic rescheduling of an application if a server fails, automatic restarts of containers, health checks.

Skip ahead to Installation if you are already familiar with Kubernetes.

Basic concepts

Kubernetes offers developers the following abstractions (logical units):

- Pods.

- Replication controllers.

- Labels.

- Services.

Pods

The pod is the basic unit of Kubernetes workloads. A pod models an application-specific "logical host" in a containerized environment. In layman's terms, it models a group of applications or services that used to run on the same server in the pre-container world. Containers inside a pod share the same network namespace and can share data volumes as well.

Replication controllers

Pods are great for grouping multiple containers into logical application units, but they don't offer replication or rescheduling in case of server failure.

This is where a replication controller, or RC, comes in handy. An RC ensures that a specified number of pods of a given service is always running across the cluster.
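The idea of "always keep N pods running" can be pictured as a comparison between a desired count and an observed count. The following is only a minimal sketch of that decision, not how Kubernetes implements it; rc_action is a hypothetical helper name introduced for illustration.

```shell
# rc_action DESIRED RUNNING
# Hypothetical helper: prints what a replication controller would have to do
# to bring the number of running pods back to the desired replica count.
rc_action() (
  desired=$1
  running=$2
  if [ "$running" -lt "$desired" ]; then
    echo "create $((desired - running))"
  elif [ "$running" -gt "$desired" ]; then
    echo "delete $((running - desired))"
  else
    echo "nothing to do"
  fi
)

rc_action 2 1   # one pod was lost, e.g. its server failed; prints: create 1
rc_action 2 2   # prints: nothing to do
```

The same comparison explains both self-healing (the observed count drops) and scaling (the desired count changes).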

Labels

Labels are key/value metadata that can be attached to any Kubernetes resource (pods, RCs, services, nodes, ...).
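Labels matter because replication controllers and services find their pods through selectors, as the manifests later in this guide show. As a rough sketch of equality-based matching (labels_match is a hypothetical helper and the tier=grid label is made up for the example):

```shell
# labels_match SELECTOR LABELS
# Both arguments are comma-separated key=value lists. Succeeds when every
# pair in SELECTOR also appears in LABELS.
labels_match() (
  selector=$1
  labels=$2
  IFS=,                       # split the selector on commas (subshell only)
  for pair in $selector; do
    case ",$labels," in
      *",$pair,"*) ;;         # this pair is present, keep checking
      *) exit 1 ;;            # a required pair is missing
    esac
  done
  exit 0
)

if labels_match "name=selenium-hub" "name=selenium-hub,tier=grid"; then
  echo "pod is selected"
fi
```

A pod may carry more labels than the selector asks for; it only has to carry all the pairs the selector lists.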

Services

Pods and replication controllers are great for deploying and distributing applications across a cluster, but pods have ephemeral IPs that change upon rescheduling or container restart.

A Kubernetes service provides a stable endpoint (a fixed virtual IP plus a port binding on the host servers) for a group of pods managed by a replication controller.

Kubernetes cluster

In its simplest form, a Kubernetes cluster is composed of two types of nodes:

- 1 Kubernetes master.

- N Kubernetes nodes.

Kubernetes master

The Kubernetes master is the control unit of the entire cluster.

The main components of the master are:

- Etcd: a globally available data store that stores information about the cluster and the services and applications running on it.

- Kube API server: the main management hub of the Kubernetes cluster; it exposes a RESTful interface.

- Controller manager: handles the replication of applications managed by replication controllers.

- Scheduler: tracks resource utilization across the cluster and assigns workloads accordingly.

Kubernetes node

Kubernetes nodes are the worker servers responsible for running pods.

The main components of a node are:

- Docker: a daemon that runs the application containers defined in pods.

- Kubelet: the control unit for pods on the local system.

- Kube-proxy: a network proxy that ensures correct routing for Kubernetes services.

Installation

In this guide, we will create a 3-node cluster using CentOS 7 servers:

- 1 Kubernetes master (kube-master)

- 2 Kubernetes nodes (kube-node1, kube-node2)

You can add as many extra nodes as you want later on, following the same installation procedure for Kubernetes nodes.

All nodes

Configure the hostnames and /etc/hosts:

# /etc/hostname

kube-master

# or kube-node1, kube-node2

# append to /etc/hosts

replace-with-master-server-ip kube-master

replace-with-node1-ip kube-node1

replace-with-node2-ip kube-node2
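Since every later step refers to the servers by name, it may be worth checking that all three names are present in /etc/hosts on each server. A minimal sketch, assuming a standard hosts file layout; missing_hosts is a hypothetical helper:

```shell
# missing_hosts FILE NAME...
# Prints every NAME that has no entry in the hosts-style FILE.
missing_hosts() (
  file=$1
  shift
  for name in "$@"; do
    # A hosts line is "IP name [alias...]"; skip comment lines, then look
    # for the name in any field after the IP address.
    awk -v n="$name" '
      /^[[:space:]]*#/ { next }
      { for (i = 2; i <= NF; i++) if ($i == n) found = 1 }
      END { exit !found }
    ' "$file" || echo "$name"
  done
)

# Usage: prints nothing when all three entries exist.
# missing_hosts /etc/hosts kube-master kube-node1 kube-node2
```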

Disable firewalld:

systemctl disable firewalld

systemctl stop firewalld

Kubernetes master

Install the Kubernetes master packages:

yum install etcd kubernetes-master

Configuration:

# /etc/etcd/etcd.conf

# leave rest of the lines unchanged

ETCD_LISTEN_CLIENT_URLS="http://0.0.0.0:2379"

ETCD_LISTEN_PEER_URLS="http://localhost:2380"

ETCD_ADVERTISE_CLIENT_URLS="http://0.0.0.0:2379"

# /etc/kubernetes/config

# leave rest of the lines unchanged

KUBE_MASTER="--master=http://kube-master:8080"

# /etc/kubernetes/apiserver

# leave rest of the lines unchanged

KUBE_API_ADDRESS="--address=0.0.0.0"

KUBE_ETCD_SERVERS="--etcd_servers=http://kube-master:2379"

Start Etcd:

systemctl start etcd

Install and configure the Flannel overlay network fabric (this is needed so that containers running on different servers can see each other):

yum install flannel

Create a Flannel configuration file (flannel-config.json):

{
  "Network": "10.20.0.0/16",
  "SubnetLen": 24,
  "Backend": {
    "Type": "vxlan",
    "VNI": 1
  }
}
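To get a feel for what these two numbers mean, the arithmetic below works out how many per-server subnets fit into the overlay network and how many addresses each one holds. Treat the results as upper bounds: this is plain CIDR arithmetic, and Flannel and Docker may reserve some subnets or addresses for themselves.

```shell
network_prefix=16   # from "Network": "10.20.0.0/16"
subnet_len=24       # from "SubnetLen": 24

# Number of /24 subnets that fit into the /16 overlay network,
# i.e. the most servers that could each be given their own subnet.
host_subnets=$(( 1 << (subnet_len - network_prefix) ))

# Addresses in one /24, minus the network and broadcast addresses.
addrs_per_host=$(( (1 << (32 - subnet_len)) - 2 ))

echo "host subnets: $host_subnets"           # host subnets: 256
echo "addresses per host: $addrs_per_host"   # addresses per host: 254
```

This matches the pod IPs seen later in this guide, such as 10.20.101.2 and 10.20.92.3, where the third octet identifies the server's subnet.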

Set the Flannel configuration in the Etcd server:

etcdctl set coreos.com/network/config < flannel-config.json

Point Flannel to the Etcd server:

# /etc/sysconfig/flanneld

FLANNEL_ETCD="http://kube-master:2379"

Enable the services so that they start on boot:

systemctl enable etcd

systemctl enable kube-apiserver

systemctl enable kube-controller-manager

systemctl enable kube-scheduler

systemctl enable flanneld

Reboot the server.

Kubernetes node

Install the Kubernetes node packages:

yum install docker kubernetes-node

The next two steps configure Docker to use overlayfs for better performance. For more information, visit this blog post.

Delete the current Docker storage directory:

systemctl stop docker

rm -rf /var/lib/docker

Change the configuration files:

# /etc/sysconfig/docker

# leave rest of lines unchanged

OPTIONS='--selinux-enabled=false'

# /etc/sysconfig/docker-storage

# leave rest of lines unchanged

DOCKER_STORAGE_OPTIONS=-s overlay

Configure kube-node1 to use our previously configured master:

# /etc/kubernetes/config

# leave rest of lines unchanged

KUBE_MASTER="--master=http://kube-master:8080"

# /etc/kubernetes/kubelet

# leave rest of the lines unchanged

KUBELET_ADDRESS="--address=0.0.0.0"

# comment this line, so that the actual hostname is used to register the node

# KUBELET_HOSTNAME="--hostname_override=127.0.0.1"

KUBELET_API_SERVER="--api_servers=http://kube-master:8080"

Install and configure the Flannel overlay network fabric (again, this is needed so that containers running on different servers can see each other):

yum install flannel

Point Flannel to the Etcd server:

# /etc/sysconfig/flanneld

FLANNEL_ETCD="http://kube-master:2379"

Enable the services:

systemctl enable docker

systemctl enable flanneld

systemctl enable kubelet

systemctl enable kube-proxy

Reboot the server.

Test your Kubernetes server

After all the servers have rebooted, check that your Kubernetes cluster is operational:

[root@kube-master ~]# kubectl get nodes

NAME LABELS STATUS

kube-node1 kubernetes.io/hostname=kube-node1 Ready

kube-node2 kubernetes.io/hostname=kube-node2 Ready
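Nodes can take a moment to register after a reboot, so it can help to poll instead of checking by hand. The sketch below assumes the three-column output shown above, with STATUS as the last column; other kubectl versions may lay the columns out differently. count_ready and wait_for_nodes are hypothetical helper names.

```shell
# count_ready reads `kubectl get nodes` output on stdin and prints how many
# nodes report Ready in the last column (the header line is skipped).
count_ready() {
  awk 'NR > 1 && $NF == "Ready" { n++ } END { print n + 0 }'
}

# wait_for_nodes EXPECTED
# Polls every 5 seconds until at least EXPECTED nodes are Ready.
wait_for_nodes() {
  until [ "$(kubectl get nodes | count_ready)" -ge "$1" ]; do
    sleep 5
  done
}

# Usage on the master: wait_for_nodes 2
```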

Example: setting up a Selenium grid using Kubernetes

Selenium is a framework for automating browsers for testing purposes. It is a powerful tool in the arsenal of any web developer.

Selenium grid enables scalable, parallel remote execution of tests across a cluster of Selenium nodes connected to a central Selenium hub.

Since Selenium nodes are stateless and the number of nodes we run is flexible, depending on our testing workloads, this is a perfect candidate application to be deployed on a Kubernetes cluster.

In the next section, we'll deploy a grid consisting of 5 application containers:

- 1 central Selenium hub that will be the remote endpoint to which our tests will connect.

- 2 Selenium nodes running Firefox.

- 2 Selenium nodes running Chrome.

Deployment strategy

To automatically manage replication and self-healing, we'll create a Kubernetes replication controller for each type of application container we listed above.

To provide developers who are running tests with a stable Selenium hub endpoint, we'll create a Kubernetes service connected to the hub replication controller.

Selenium hub

Replication controller

# selenium-hub-rc.yaml
apiVersion: v1
kind: ReplicationController
metadata:
  name: selenium-hub
spec:
  replicas: 1
  selector:
    name: selenium-hub
  template:
    metadata:
      labels:
        name: selenium-hub
    spec:
      containers:
      - name: selenium-hub
        image: selenium/hub
        ports:
        - containerPort: 4444
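Most of this manifest is boilerplate around four values: the name, the image, the replica count and the container port. As an illustration only, a small generator like the one below makes that structure explicit. render_rc is a hypothetical helper, and it covers just the fields used by the hub; the Selenium node manifests later in this guide also need env entries.

```shell
# render_rc NAME IMAGE REPLICAS PORT
# Prints a ReplicationController manifest in the same shape as the hub's.
render_rc() {
  cat <<EOF
apiVersion: v1
kind: ReplicationController
metadata:
  name: $1
spec:
  replicas: $3
  selector:
    name: $1
  template:
    metadata:
      labels:
        name: $1
    spec:
      containers:
      - name: $1
        image: $2
        ports:
        - containerPort: $4
EOF
}

render_rc selenium-hub selenium/hub 1 4444
# To submit it directly instead of saving a file:
# render_rc selenium-hub selenium/hub 1 4444 | kubectl create -f -
```

Note that the selector and the template labels are generated from the same value; if they differ, the controller will not recognise the pods it creates as its own.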

Deployment:

[root@kube-master ~]# kubectl create -f selenium-hub-rc.yaml

replicationcontrollers/selenium-hub

[root@kube-master ~]# kubectl get rc

CONTROLLER CONTAINER(S) IMAGE(S) SELECTOR REPLICAS

selenium-hub selenium-hub selenium/hub name=selenium-hub 1

[root@kube-master ~]# kubectl get pods

NAME READY STATUS RESTARTS AGE

selenium-hub-pilc8 1/1 Running 0 50s

[root@kube-master ~]# kubectl describe pod selenium-hub-pilc8

Name: selenium-hub-pilc8

Namespace: default

Image(s): selenium/hub

Node: kube-node2/45.63.16.92

Labels: name=selenium-hub

Status: Running

Reason:

Message:

IP: 10.20.101.2

Replication Controllers: selenium-hub (1/1 replicas created)

Containers:

selenium-hub:

Image: selenium/hub

State: Running

Started: Sat, 24 Oct 2015 16:01:39 +0000

Ready: True

Restart Count: 0

Conditions:

Type Status

Ready True

Events:

FirstSeen LastSeen Count From SubobjectPath Reason Message

Sat, 24 Oct 2015 16:01:02 +0000 Sat, 24 Oct 2015 16:01:02 +0000 1 {scheduler } scheduled Successfully assigned selenium-hub-pilc8 to kube-node2

Sat, 24 Oct 2015 16:01:05 +0000 Sat, 24 Oct 2015 16:01:05 +0000 1 {kubelet kube-node2} implicitly required container POD pulled Successfully pulled Pod container image "gcr.io/google_containers/pause:0.8.0"

Sat, 24 Oct 2015 16:01:05 +0000 Sat, 24 Oct 2015 16:01:05 +0000 1 {kubelet kube-node2} implicitly required container POD created Created with docker id 6de00106b19c

Sat, 24 Oct 2015 16:01:05 +0000 Sat, 24 Oct 2015 16:01:05 +0000 1 {kubelet kube-node2} implicitly required container POD started Started with docker id 6de00106b19c

Sat, 24 Oct 2015 16:01:39 +0000 Sat, 24 Oct 2015 16:01:39 +0000 1 {kubelet kube-node2} spec.containers pulled Successfully pulled image "selenium/hub"

Sat, 24 Oct 2015 16:01:39 +0000 Sat, 24 Oct 2015 16:01:39 +0000 1 {kubelet kube-node2} spec.containers created Created with docker id 7583cc09268c

Sat, 24 Oct 2015 16:01:39 +0000 Sat, 24 Oct 2015 16:01:39 +0000 1 {kubelet kube-node2} spec.containers started Started with docker id 7583cc09268c

Here we can see that Kubernetes has placed my selenium-hub container on kube-node2.

Service

# selenium-hub-service.yaml
apiVersion: v1
kind: Service
metadata:
  name: selenium-hub
spec:
  type: NodePort
  ports:
  - port: 4444
    protocol: TCP
    nodePort: 30000
  selector:
    name: selenium-hub

Deployment:

[root@kube-master ~]# kubectl create -f selenium-hub-service.yaml

You have exposed your service on an external port on all nodes in your

cluster. If you want to expose this service to the external internet, you may

need to set up firewall rules for the service port(s) (tcp:30000) to serve traffic.

See http://releases.k8s.io/HEAD/docs/user-guide/services-firewalls.md for more details.

services/selenium-hub

[root@kube-master ~]# kubectl get services

NAME LABELS SELECTOR IP(S) PORT(S)

kubernetes component=apiserver,provider=kubernetes <none> 10.254.0.1 443/TCP

selenium-hub <none> name=selenium-hub 10.254.124.73 4444/TCP

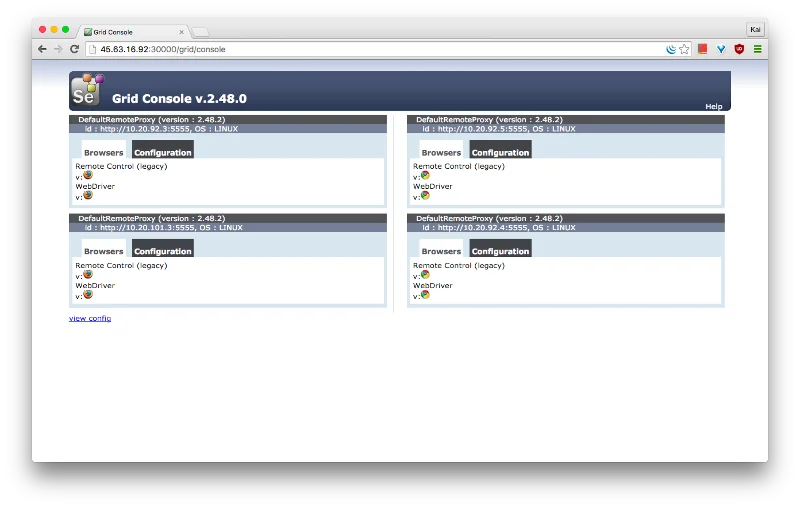

After deploying the service, it will be reachable from:

- Any Kubernetes node, via the virtual IP 10.254.124.73 and port 4444.

- External networks, via the public IP of any Kubernetes node, on port 30000.
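For test code, the two access paths translate into two possible remote URLs. The sketch below assumes the hub exposes its WebDriver endpoint under /wd/hub, which is an assumption about the selenium/hub image and is not stated in this guide; hub_url is a hypothetical helper.

```shell
# hub_url HOST PORT
# Builds the remote WebDriver URL for a hub reachable at HOST:PORT
# (the /wd/hub path is an assumption about the hub image).
hub_url() {
  echo "http://$1:$2/wd/hub"
}

hub_url 10.254.124.73 4444   # from inside the cluster, via the service IP
hub_url 45.63.16.92 30000    # from outside, via a node's public IP and the NodePort
```

The second address uses the public IP of kube-node2 from the output above; the public IP of any other node in the cluster should work equally well with port 30000.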

(using the public IP of another Kubernetes node)

Selenium nodes

Firefox node replication controller:

# selenium-node-firefox-rc.yaml
apiVersion: v1
kind: ReplicationController
metadata:
  name: selenium-node-firefox
spec:
  replicas: 2
  selector:
    name: selenium-node-firefox
  template:
    metadata:
      labels:
        name: selenium-node-firefox
    spec:
      containers:
      - name: selenium-node-firefox
        image: selenium/node-firefox
        ports:
        - containerPort: 5900
        env:
        - name: HUB_PORT_4444_TCP_ADDR
          value: "replace_with_service_ip"
        - name: HUB_PORT_4444_TCP_PORT
          value: "4444"

Deployment:

Replace replace_with_service_ip in selenium-node-firefox-rc.yaml with the actual Selenium hub service IP, in this case 10.254.124.73.
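If you would rather not edit the file by hand, the substitution can be done with sed. This is a minimal sketch; fill_service_ip is a hypothetical helper, and the service IP is passed in as an argument rather than looked up.

```shell
# fill_service_ip IP
# Reads a manifest on stdin and replaces the replace_with_service_ip
# placeholder with the given address.
fill_service_ip() {
  sed "s/replace_with_service_ip/$1/g"
}

echo 'value: "replace_with_service_ip"' | fill_service_ip 10.254.124.73
# prints: value: "10.254.124.73"

# Usage, leaving the original file untouched:
# fill_service_ip 10.254.124.73 < selenium-node-firefox-rc.yaml | kubectl create -f -
```

The same helper works for any other manifest that contains the same placeholder.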

[root@kube-master ~]# kubectl create -f selenium-node-firefox-rc.yaml

replicationcontrollers/selenium-node-firefox

[root@kube-master ~]# kubectl get rc

CONTROLLER CONTAINER(S) IMAGE(S) SELECTOR REPLICAS

selenium-hub selenium-hub selenium/hub name=selenium-hub 1

selenium-node-firefox selenium-node-firefox selenium/node-firefox name=selenium-node-firefox 2

[root@kube-master ~]# kubectl get pods

NAME READY STATUS RESTARTS AGE

selenium-hub-pilc8 1/1 Running 1 1h

selenium-node-firefox-lc6qt 1/1 Running 0 2m

selenium-node-firefox-y9qjp 1/1 Running 0 2m

[root@kube-master ~]# kubectl describe pod selenium-node-firefox-lc6qt

Name: selenium-node-firefox-lc6qt

Namespace: default

Image(s): selenium/node-firefox

Node: kube-node2/45.63.16.92

Labels: name=selenium-node-firefox

Status: Running

Reason:

Message:

IP: 10.20.101.3

Replication Controllers: selenium-node-firefox (2/2 replicas created)

Containers:

selenium-node-firefox:

Image: selenium/node-firefox

State: Running

Started: Sat, 24 Oct 2015 17:08:37 +0000

Ready: True

Restart Count: 0

Conditions:

Type Status

Ready True

Events:

FirstSeen LastSeen Count From SubobjectPath Reason Message

Sat, 24 Oct 2015 17:08:13 +0000 Sat, 24 Oct 2015 17:08:13 +0000 1 {scheduler } scheduled Successfully assigned selenium-node-firefox-lc6qt to kube-node2

Sat, 24 Oct 2015 17:08:13 +0000 Sat, 24 Oct 2015 17:08:13 +0000 1 {kubelet kube-node2} implicitly required container POD pulled Pod container image "gcr.io/google_containers/pause:0.8.0" already present on machine

Sat, 24 Oct 2015 17:08:13 +0000 Sat, 24 Oct 2015 17:08:13 +0000 1 {kubelet kube-node2} implicitly required container POD created Created with docker id cdcb027c6548

Sat, 24 Oct 2015 17:08:13 +0000 Sat, 24 Oct 2015 17:08:13 +0000 1 {kubelet kube-node2} implicitly required container POD started Started with docker id cdcb027c6548

Sat, 24 Oct 2015 17:08:36 +0000 Sat, 24 Oct 2015 17:08:36 +0000 1 {kubelet kube-node2} spec.containers pulled Successfully pulled image "selenium/node-firefox"

Sat, 24 Oct 2015 17:08:36 +0000 Sat, 24 Oct 2015 17:08:36 +0000 1 {kubelet kube-node2} spec.containers created Created with docker id 8931b7f7a818

Sat, 24 Oct 2015 17:08:37 +0000 Sat, 24 Oct 2015 17:08:37 +0000 1 {kubelet kube-node2} spec.containers started Started with docker id 8931b7f7a818

[root@kube-master ~]# kubectl describe pod selenium-node-firefox-y9qjp

Name: selenium-node-firefox-y9qjp

Namespace: default

Image(s): selenium/node-firefox

Node: kube-node1/185.92.221.67

Labels: name=selenium-node-firefox

Status: Running

Reason:

Message:

IP: 10.20.92.3

Replication Controllers: selenium-node-firefox (2/2 replicas created)

Containers:

selenium-node-firefox:

Image: selenium/node-firefox

State: Running

Started: Sat, 24 Oct 2015 17:08:13 +0000

Ready: True

Restart Count: 0

Conditions:

Type Status

Ready True

Events:

FirstSeen LastSeen Count From SubobjectPath Reason Message

Sat, 24 Oct 2015 17:08:13 +0000 Sat, 24 Oct 2015 17:08:13 +0000 1 {scheduler } scheduled Successfully assigned selenium-node-firefox-y9qjp to kube-node1

Sat, 24 Oct 2015 17:08:13 +0000 Sat, 24 Oct 2015 17:08:13 +0000 1 {kubelet kube-node1} implicitly required container POD pulled Pod container image "gcr.io/google_containers/pause:0.8.0" already present on machine

Sat, 24 Oct 2015 17:08:13 +0000 Sat, 24 Oct 2015 17:08:13 +0000 1 {kubelet kube-node1} implicitly required container POD created Created with docker id ea272dd36bd5

Sat, 24 Oct 2015 17:08:13 +0000 Sat, 24 Oct 2015 17:08:13 +0000 1 {kubelet kube-node1} implicitly required container POD started Started with docker id ea272dd36bd5

Sat, 24 Oct 2015 17:08:13 +0000 Sat, 24 Oct 2015 17:08:13 +0000 1 {kubelet kube-node1} spec.containers created Created with docker id 6edbd6b9861d

Sat, 24 Oct 2015 17:08:13 +0000 Sat, 24 Oct 2015 17:08:13 +0000 1 {kubelet kube-node1} spec.containers started Started with docker id 6edbd6b9861d

As we can see, Kubernetes has created 2 replicas of selenium-node-firefox and distributed them across the cluster. Pod selenium-node-firefox-lc6qt is on kube-node2, while pod selenium-node-firefox-y9qjp is on kube-node1.

We repeat the same process for our Selenium Chrome nodes.

Chrome node replication controller:

# selenium-node-chrome-rc.yaml
apiVersion: v1
kind: ReplicationController
metadata:
  name: selenium-node-chrome
  labels:
    app: selenium-node-chrome
spec:
  replicas: 2
  selector:
    app: selenium-node-chrome
  template:
    metadata:
      labels:
        app: selenium-node-chrome
    spec:
      containers:
      - name: selenium-node-chrome
        image: selenium/node-chrome
        ports:
        - containerPort: 5900
        env:
        - name: HUB_PORT_4444_TCP_ADDR
          value: "replace_with_service_ip"
        - name: HUB_PORT_4444_TCP_PORT
          value: "4444"

Deployment:

[root@kube-master ~]# kubectl create -f selenium-node-chrome-rc.yaml

replicationcontrollers/selenium-node-chrome

[root@kube-master ~]# kubectl get rc

CONTROLLER CONTAINER(S) IMAGE(S) SELECTOR REPLICAS

selenium-hub selenium-hub selenium/hub name=selenium-hub 1

selenium-node-chrome selenium-node-chrome selenium/node-chrome app=selenium-node-chrome 2

selenium-node-firefox selenium-node-firefox selenium/node-firefox name=selenium-node-firefox 2

[root@kube-master ~]# kubectl get pods

NAME READY STATUS RESTARTS AGE

selenium-hub-pilc8 1/1 Running 1 1h

selenium-node-chrome-9u1ld 1/1 Running 0 1m

selenium-node-chrome-mgi52 1/1 Running 0 1m

selenium-node-firefox-lc6qt 1/1 Running 0 11m

selenium-node-firefox-y9qjp 1/1 Running 0 11m

Wrapping up

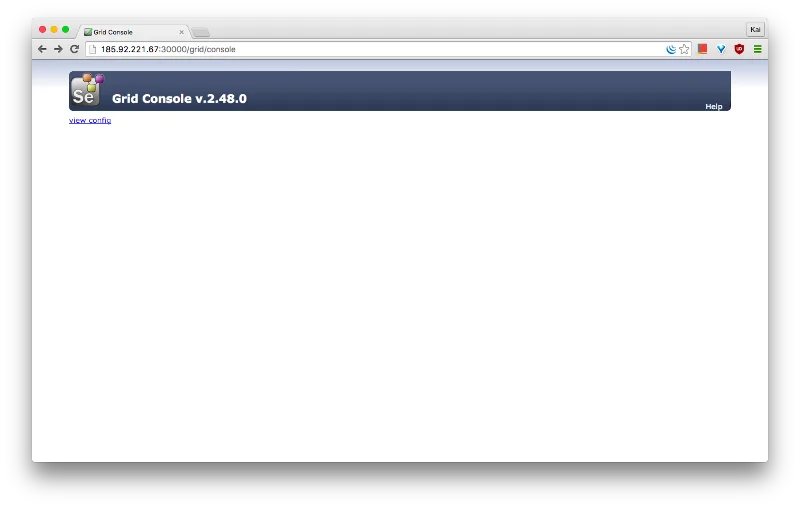

In this guide, we set up a small Kubernetes cluster of 3 servers (1 master controller + 2 workers).

Using pods, RCs and a service, we successfully deployed a Selenium Grid consisting of a central hub and 4 nodes, enabling developers to run 4 concurrent Selenium tests at a time on the cluster.

Kubernetes automatically scheduled the containers across the entire cluster.


Self-healing

Kubernetes automatically reschedules pods to healthy servers if one or more of our servers go down. In my example, kube-node2 is currently running the Selenium hub pod and 1 Selenium Firefox node pod.

[root@kube-node2 ~]# docker ps

CONTAINER ID IMAGE COMMAND CREATED STATUS PORTS NAMES

5617399f146c selenium/node-firefox "/opt/bin/entry_poin 5 minutes ago Up 5 minutes k8s_selenium-node-firefox.46e635d8_selenium-node-firefox-zmj1r_default_31c89517-7a75-11e5-8648-5600001611e0_baae8e00

185230a3b431 gcr.io/google_containers/pause:0.8.0 "/pause" 5 minutes ago Up 5 minutes k8s_POD.3805e8b7_selenium-node-firefox-zmj1r_default_31c89517-7a75-11e5-8648-5600001611e0_40f809df

fdd5834c249d selenium/hub "/opt/bin/entry_poin About an hour ago Up About an hour k8s_selenium-hub.cb8bf0ed_selenium-hub-pilc8_default_6c98c1ff-7a68-11e5-8648-5600001611e0_5765e2c9

00e4ccb0bda8 gcr.io/google_containers/pause:0.8.0 "/pause" About an hour ago Up About an hour k8s_POD.3b3ee8b9_selenium-hub-pilc8_default_6c98c1ff-7a68-11e5-8648-5600001611e0_8398ac33

We will simulate a server failure by shutting down kube-node2. After a few minutes, you should see that the containers that were running on kube-node2 have been rescheduled to kube-node1, ensuring minimal disruption of service.

[root@kube-node1 ~]# docker ps

CONTAINER ID IMAGE COMMAND CREATED STATUS PORTS NAMES

5bad5f582698 selenium/hub "/opt/bin/entry_poin 19 minutes ago Up 19 minutes k8s_selenium-hub.cb8bf0ed_selenium-hub-hycf2_default_fe9057cf-7a76-11e5-8648-5600001611e0_ccaad50a

dd1565a94919 selenium/node-firefox "/opt/bin/entry_poin 20 minutes ago Up 20 minutes k8s_selenium-node-firefox.46e635d8_selenium-node-firefox-g28z5_default_fe932673-7a76-11e5-8648-5600001611e0_fc79f977

2be1a316aa47 gcr.io/google_containers/pause:0.8.0 "/pause" 20 minutes ago Up 20 minutes k8s_POD.3805e8b7_selenium-node-firefox-g28z5_default_fe932673-7a76-11e5-8648-5600001611e0_dc204ad2

da75a0242a9e gcr.io/google_containers/pause:0.8.0 "/pause" 20 minutes ago Up 20 minutes k8s_POD.3b3ee8b9_selenium-hub-hycf2_default_fe9057cf-7a76-11e5-8648-5600001611e0_1b10c0e7

c611b68330de selenium/node-firefox "/opt/bin/entry_poin 33 minutes ago Up 33 minutes k8s_selenium-node-firefox.46e635d8_selenium-node-firefox-8ylo2_default_31c8a8f3-7a75-11e5-8648-5600001611e0_922af821

828031da6b3c gcr.io/google_containers/pause:0.8.0 "/pause" 33 minutes ago Up 33 minutes k8s_POD.3805e8b7_selenium-node-firefox-8ylo2_default_31c8a8f3-7a75-11e5-8648-5600001611e0_289cd555

caf4e725512e selenium/node-chrome "/opt/bin/entry_poin 46 minutes ago Up 46 minutes k8s_selenium-node-chrome.362a34ee_selenium-node-chrome-mgi52_default_392a2647-7a73-11e5-8648-5600001611e0_3c6e855a

409a20770787 selenium/node-chrome "/opt/bin/entry_poin 46 minutes ago Up 46 minutes k8s_selenium-node-chrome.362a34ee_selenium-node-chrome-9u1ld_default_392a15a4-7a73-11e5-8648-5600001611e0_ac3f0191

7e2d942422a5 gcr.io/google_containers/pause:0.8.0 "/pause" 47 minutes ago Up 47 minutes k8s_POD.3805e8b7_selenium-node-chrome-9u1ld_default_392a15a4-7a73-11e5-8648-5600001611e0_f5858b73

a3a65ea99a99 gcr.io/google_containers/pause:0.8.0 "/pause" 47 minutes ago Up 47 minutes k8s_POD.3805e8b7_selenium-node-chrome-mgi52_default_392a2647-7a73-11e5-8648-5600001611e0_20a70ab6
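The long container names in these listings encode which pod each container belongs to. Judging from the output above, they follow the pattern k8s_<container>.<hash>_<pod>_<namespace>_<uid>_<suffix>; that pattern is inferred from this listing and may differ in other Kubernetes versions. A small sketch for pulling out the pod name (pod_of is a hypothetical helper):

```shell
# pod_of CONTAINER_NAME
# Extracts the pod name, the third underscore-separated field, from a
# kubelet-generated Docker container name.
pod_of() {
  echo "$1" | awk -F_ '{ print $3 }'
}

pod_of k8s_selenium-hub.cb8bf0ed_selenium-hub-hycf2_default_fe9057cf-7a76-11e5-8648-5600001611e0_ccaad50a
# prints: selenium-hub-hycf2
```

Comparing the two listings this way shows that the hub pod on kube-node1 (selenium-hub-hycf2) is a new pod and not the original selenium-hub-pilc8: the replication controller created a replacement instead of moving the old one.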

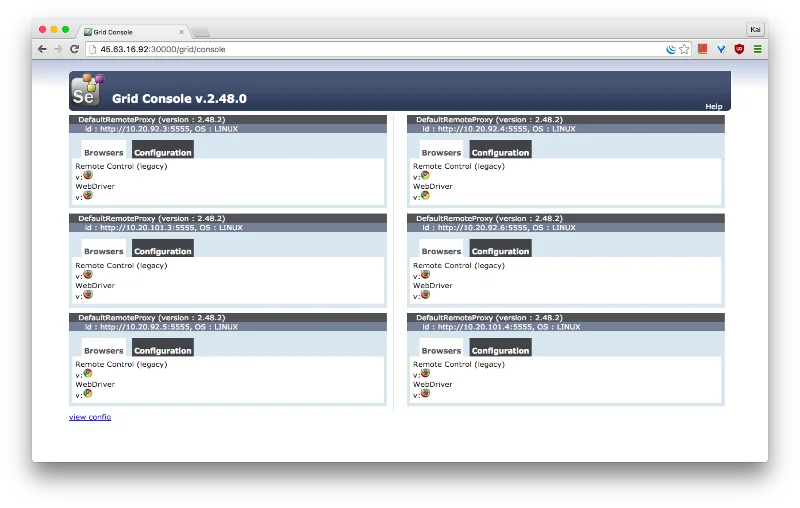

Scaling your Selenium Grid

Scaling your Selenium Grid is super easy with Kubernetes. Imagine that instead of 2 Firefox nodes, I would like to run 4. The upscaling can be done with a single command:

[root@kube-master ~]# kubectl scale rc selenium-node-firefox --replicas=4

scaled

[root@kube-master ~]# kubectl get rc

CONTROLLER CONTAINER(S) IMAGE(S) SELECTOR REPLICAS

selenium-hub selenium-hub selenium/hub name=selenium-hub 1

selenium-node-chrome selenium-node-chrome selenium/node-chrome app=selenium-node-chrome 2

selenium-node-firefox selenium-node-firefox selenium/node-firefox name=selenium-node-firefox 4

[root@kube-master ~]# kubectl get pods

NAME READY STATUS RESTARTS AGE

selenium-hub-pilc8 1/1 Running 1 1h

selenium-node-chrome-9u1ld 1/1 Running 0 14m

selenium-node-chrome-mgi52 1/1 Running 0 14m

selenium-node-firefox-8ylo2 1/1 Running 0 40s

selenium-node-firefox-lc6qt 1/1 Running 0 24m

selenium-node-firefox-y9qjp 1/1 Running 0 24m

selenium-node-firefox-zmj1r 1/1 Running 0 40s
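If several people resize the grid, a thin wrapper can guard against typos in the controller name or replica count. The sketch below only prints the command it would run; scale_cmd is a hypothetical helper, and the controller names are the ones created earlier in this guide.

```shell
# scale_cmd BROWSER REPLICAS
# Validates its arguments and prints the kubectl command that resizes the
# replication controller for that browser.
scale_cmd() (
  browser=$1
  replicas=$2
  case $browser in
    firefox|chrome) ;;
    *) echo "unknown browser: $browser" >&2; exit 1 ;;
  esac
  case $replicas in
    ''|*[!0-9]*) echo "replicas must be a non-negative integer" >&2; exit 1 ;;
  esac
  echo "kubectl scale rc selenium-node-$browser --replicas=$replicas"
)

scale_cmd firefox 4
# prints: kubectl scale rc selenium-node-firefox --replicas=4
```

Scaling down works the same way: pass a smaller number and the replication controller removes the surplus pods.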

(using the public IP of another Kubernetes node)